From Wetware to Tilt Brush, How Artists Tested the Limits of Technology in the 2010s

Cécile B. Evans, Jenna Sutela, Jonathan Yeo, Tiffany Funk, Luba Elliott and Anna Ridler appraise the impact of new tools and platforms over the last decade

It is now a decade since the art critic and philosopher Boris Groys proclaimed a radical shift in our image culture ‘from aesthetics to autopoetics’, which is to say ‘to the production of one’s own public self’. Coinciding exactly with the birth of Instagram, the publication of Groys’s Going Public (2010) affirmed the development of ‘collaborative, democratic, decentralized, [and] de-authorized’ artistic strategies that reflected and embodied our networked selves. Recent years have witnessed a surge in artists’ attempts to utilize and critique developing technologies in ways that problematize their social implications. This has involved experimentation with publicly available platforms, such as Google Tilt Brush, as well as the integration of virtual- and augmented-reality technology into the creation of newly immersive environments. Ever-iterating AI algorithms have also prompted a new generation of artists to consider the creative potential of machine learning – training programs to think for themselves – as well as the problems inherent in such a cession of agency. In order to appraise the impact of these new platforms, I asked a group of artists, curators and theorists, whose work engages with the discourse surrounding art and technology, how they felt such tools were affecting artistic development, both practically and conceptually.

Cécile B. Evans

I’m currently adapting the Industrial Era ballet Giselle (1841) as an eco-feminist thriller for which I’ve been looking towards user-driven platforms, like deep artificial intelligence programs that produce audio-visual effects, and how natural systems (like bacteria, yeast, fungi) mutate and rebel. This comes from an increasing unwillingness to work with corporate platforms or technologies that invariably feed into colonial and patriarchal narratives of dominance and capital. User-based platforms like DeepFaceLab are also problematic, but for the moment remain non-commodified and open-source. There is more potential in subverting these than commercial platforms that have proven they can siphon value from anything. Natural systems can provide blueprints for these subversions, specifically how they respond to threats and thrive on diversity and change.

My fatigue with corporate platforms also extends to a general change in how I approach things. I’m deeply bored by technological narratives about how the universe is an apocalyptic simulation or fantasies of living in a hauntological AI thought experiment. People would (and do) suffer from these ideas, which ignore too many lived realities and perpetuate an evolutionary paralysis. It’s impossible to overlook that these narratives are, again, tied to gatekeeping systemic bias, driven by satiating a capitalist thirst to monopolize whatever next accelerated phase this existence has to offer. There’s nothing ‘interesting’ about that. Technology is thrilling when it can actually benefit large portions of society, rather than reinforce existing structures that are already failing us. I want to imagine strategies that are recalcitrant, mutable, non-binary and illegible – closer to the realities that many people experience every day. Approaches like these have greater potential to push us into a positive phase of evolution than recent attempts to streamline our existence and create an intelligence ‘just like us’.

Jenna Sutela

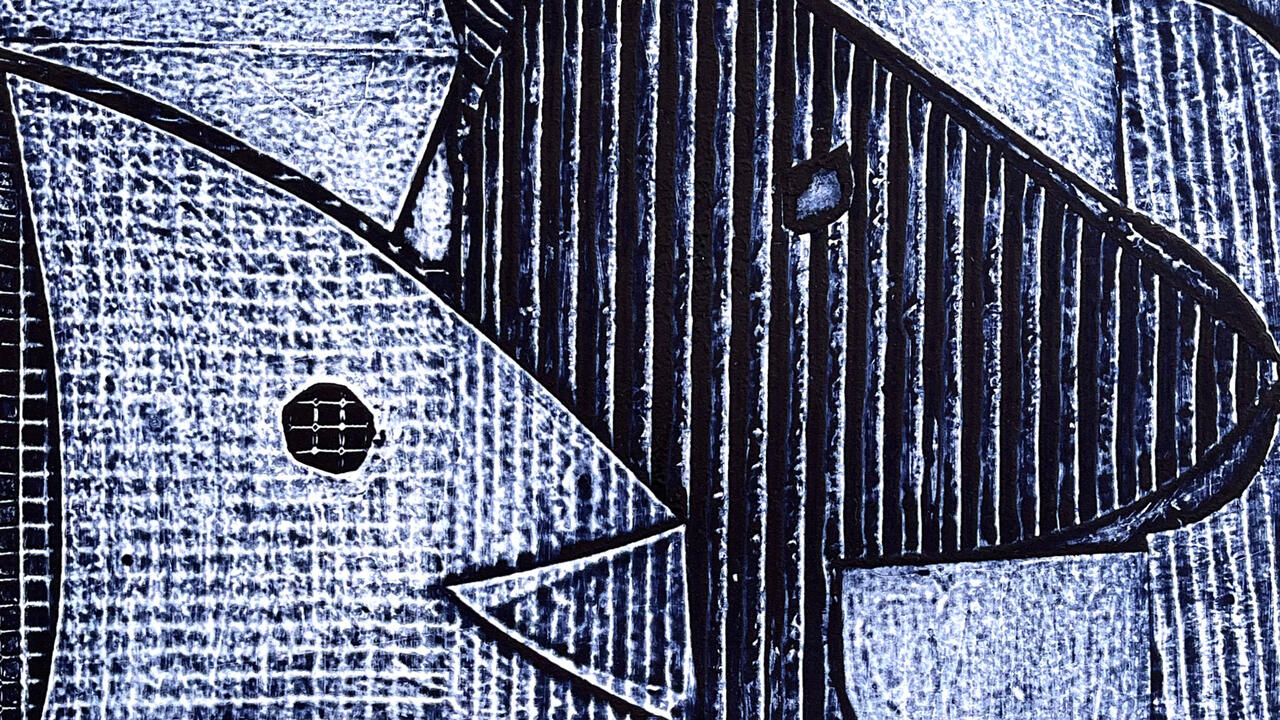

Over the last decade, my exploration of wetware (as opposed to hardware and software) has brought me into contact with Physarum polycephalum (slime mould): the single-celled yet many-headed organism often referred to as a biocomputer. This has led me to a path of biocomputational experimentation, working with ever-simpler forms of life. At the same time, I’ve had the chance to collaborate with intelligent machines and machine learning while engaging both ancient and futuristic living materials in a non-linear way. This has led me to the conclusion that computers should be approached on their own terms, rather than anthropomorphized. The so-called black box problem in machine learning refers to the fact that it is sometimes hard to explain how an AI has come to its conclusions.

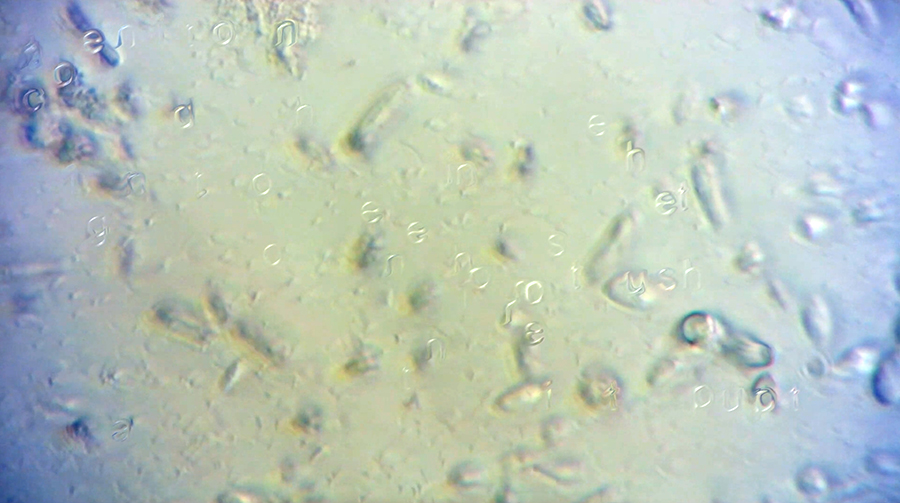

For my project Gut-Machine Poetry (2017), I introduced entropic processes to computing by inserting fermenting foodstuffs (wetware) into the guts of a computer. Changes in living material – the stochastic movement of yeast eating sugar in a kombucha tea ferment – are observed through a microscope, analyzed by custom software and used to generate random numbers that interact with text, jumbling it into a new kind of language. This jumbling algorithm takes after Jumbo, a program that cognitive scientist Douglas Hofstadter developed in 1995 to solve anagrams based on the actions inside a biological cell.
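The pipeline described above (observed movement, then random numbers, then jumbled text) can be loosely illustrated in code. What follows is only a minimal sketch under stated assumptions, not the custom software used in the work and not Hofstadter's Jumbo: the measurements are a made-up stand-in for microscope readings, and the function names `seed_from_measurements` and `jumble` are hypothetical.

```python
import random
from typing import Iterable


def seed_from_measurements(measurements: Iterable[float]) -> int:
    """Fold a series of observed values into a single integer seed.

    Assumption: the readings are plain floats; the mixing constants
    (31 and 2**32) are arbitrary choices made for this illustration.
    """
    seed = 0
    for value in measurements:
        seed = (seed * 31 + int(value * 1000)) % (2**32)
    return seed


def jumble(text: str, seed: int) -> str:
    """Shuffle the letters inside each word, driven by the given seed."""
    rng = random.Random(seed)
    jumbled_words = []
    for word in text.split():
        letters = list(word)
        rng.shuffle(letters)  # in-place shuffle using the seeded generator
        jumbled_words.append("".join(letters))
    return " ".join(jumbled_words)


# Hypothetical readings standing in for yeast movement seen under a microscope.
readings = [0.12, 0.57, 0.33]
print(jumble("many headed reading", seed_from_measurements(readings)))
```

In this sketch the same readings always give the same jumble. In a live ferment the readings would keep changing, so the text would keep changing with them.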

As a decentralized autonomous organism, slime mould has appeared as a collaborator, or a co-performer, in many of my recent works. In the case of Many-Headed Reading (2016), I ingested it before performing a reading in which I imagined its hive-like behaviour ‘programming’ my own. I considered the speech act to be a form of artificial intelligence: the slime serving as a paranoiac-critical agent, helping me make connections where none previously existed, its movement taking me where I needed to go.

Jonathan Yeo

Painting is an old technology that doesn’t need improving, but Google saw the potential for VR to achieve an equivalent in Tilt Brush, through which the same sort of gestural marks can be produced without having to obey the laws of physics. The sculptor Antony Gormley came to my studio, tried Tilt Brush and reached exactly the same conclusion as I did – that it should be used for three-dimensional design. Implying it is a painting tool by calling it a ‘brush’ is very misleading. It’s a way of rapidly prototyping 3D design, sculpture and architecture without the laborious additional effort of making 2D plans.

The use of Tilt Brush is not as widespread as I thought it would be by now. It will happen, I’m sure, but there’s often a disconnect between the people making the programs and those using them. For me, it was an odd realization that, once the VR headset was removed, the object you had created didn’t exist. Pangolin Editions, who made the bronzes for Damien Hirst’s Treasures from the Wreck of the Unbelievable (2017), are very keen to explore new production methods for traditional artworks. I worked with them on Homage to Paolozzi (Self-Portrait) (2017) – the first sculpture ever designed using VR software – which they cast for me in bronze: a material I deliberately chose for its being as irrefutably solid as you can get.

Tiffany Funk

For those of us raised in cultures where video games are pervasive, it seems only natural that so many talented artists would be interested in a medium that is both interactive and embodied. In particular, those disappointed by the lack of galvanization within the video art and film communities see gaming art as the radical tool we have needed for some time.

A number of notable works from the past decade not only adeptly critique gaming as a medium but also offer a broader cultural perspective. Many of these projects appropriate traditional media formats and subvert them or use game hardware and mechanics in ways that cleave more closely to conceptual and social practice art forms. Eddo Stern’s Darkgame 4 (2014), for instance, challenges the notion that video games have to be ‘fun’ to spotlight their physical repercussions. Angela Washko’s performance video, We Actually Met in World of Warcraft (2015), represents the culmination of three years of World of Warcraft infiltration, in which the artist confronted other players about the treatment of women within gaming spaces. Not only is the work poignant for the variety of answers she receives, but it also raises questions about online safety. While World of Warcraft is virtual, Washko’s altercations with gamers put her in real-life danger, as was highlighted by the 2014 #Gamergate controversy, which led game developer Zoe Quinn – a victim of severe online harassment and death threats – to flee her home after her address was made public.

Luba Elliott

The past few years have seen a rapid development in AI technologies, which are now capable of recognizing faces and generating highly realistic images. Concurrently, artists have been stress-testing such technologies: generating new still lifes from old drawings and devising ways of fooling facial-recognition systems. While technological progress has always been linked to artistic experimentation, AI has now transcended the tool function to become a fully creative partner: presenting a machine’s understanding of the world; producing stylistic variations on an artist’s work; finding commonalities between radically different art objects.

Recent developments can generally be divided into two approaches. The first – whereby neural network-based algorithms analyze large datasets of images in order to produce new works of art – has led to a focus on the inherent biases of these datasets, for example, the predominance of white males over female and minority groups in public datasets. Artists working with such open-sourced datasets, therefore, run the risk of entrenching old power structures. By contrast, a striking recent example of the second approach is Nonfacial Portraits (2018), by Korean artist duo Shinseungback Kimyonghun, which paired a group of artists with a facial-recognition system in order to produce portraits whose sitters remained undetectable by AI.

Anna Ridler

Artists have long trialled – and tested the limits of – new technologies. Machine learning, which utilizes algorithms and data sets, allows me to interweave ideas and concepts in a way that a more analogue medium wouldn’t. In a sense, machine learning falls within the genealogy of the artist’s workshop: the art starts and ends with me but I’m allowing something else to produce it.

The sphere of art and technology isn’t a static space. I’m currently working with Opera North in Leeds to train a series of GANs – each a pair of unsupervised neural networks – on musical scores. Different opera singers are trying to respond to what the GANs have produced, such that it becomes a fluid process of looping and iterating between the performer and the algorithm. The difference between algorithms and data is that the former tend to be authored, whereas data is often anonymized. But every piece of data is a trace of someone’s interactions in the world. This isn’t cold or sterile or algorithmic: it’s a history of people.

Main image: Jenna Sutela, Gut-Machine Poetry, 2017. Courtesy: the artist; photograph: Mikko Gaestel

Luba Elliott is a curator and researcher specializing in artificial intelligence in the creative industries. She has given talks at The Photographers’ Gallery, London, UK; ZKM, Karlsruhe, Germany; and Impakt Festival, Utrecht, the Netherlands. Her recent projects include ART – AI Festival, Leicester, UK; the online gallery aiartonline.com; and NeurIPS Machine Learning for Creativity and Design, Montreal, Canada.

Cécile B. Evans is an American-Belgian artist based in London, UK. Her current exhibition, ‘Amos’ World’, is at 49 Nord 6 Est – Frac Lorraine, Metz, France, until 26 January 2020. Other recent solo exhibitions include: Museum Abteiberg, Mönchengladbach, Germany; Tramway, Glasgow, UK; Chateau Shatto, Los Angeles, USA; Museo Madre, Naples, Italy; mumok, Vienna, Austria; Kunsthal Aarhus, Denmark; and Serpentine Galleries, London, UK.

Tiffany Funk is an artist, critical theorist and researcher specializing in emerging media, computer art, video games and performance-art practices. She is editor-in-chief of the Video Game Art (VGA) Reader and co-founding lecturer and academic advisor for IDEAS – an intermedia, theory and practice-based Bachelor of Arts degree at the University of Illinois, Chicago, USA.

Anna Ridler is an artist and researcher who lives and works in London, UK. Her work has been shown at Barbican, London; HeK, Basel, Switzerland; Ars Electronica, Linz, Austria, among many others. She won the Dare Art Prize 2018–19. She is currently nominated for a Design Museum ‘Design of the Year’ for her work on datasets and categorization.

Jenna Sutela is a Finnish artist based in Berlin, Germany. Her audio-visual works, sculptures and performances have been widely shown internationally, including at Guggenheim Bilbao, Spain; Museum of Contemporary Art Tokyo, Japan; and Serpentine Galleries, London, UK. Her work is currently included in the exhibition ‘Mud Muses: A Rant About Technology’ at Moderna Museet, Stockholm, Sweden, and on display at Serpentine Galleries, London, UK, until 12 January.

Jonathan Yeo is a British portrait artist based in London, UK. His sitters have included Sir David Attenborough, Idris Elba, Damien Hirst and Malala Yousafzai. He has had retrospectives at Museum of National History, Frederiksborg, Denmark (2016); Laing Art Gallery, Newcastle, UK (2014–15); and National Portrait Gallery, London (2013). In 2018, Yeo exhibited a series of works at London’s Royal Academy of Arts that included an innovative bronze sculpture made using VR, 3D scanning and 3D printing. He was also named Artist of the Year 2018 by GQ Magazine.